Performing Global Sensitivity Analysis

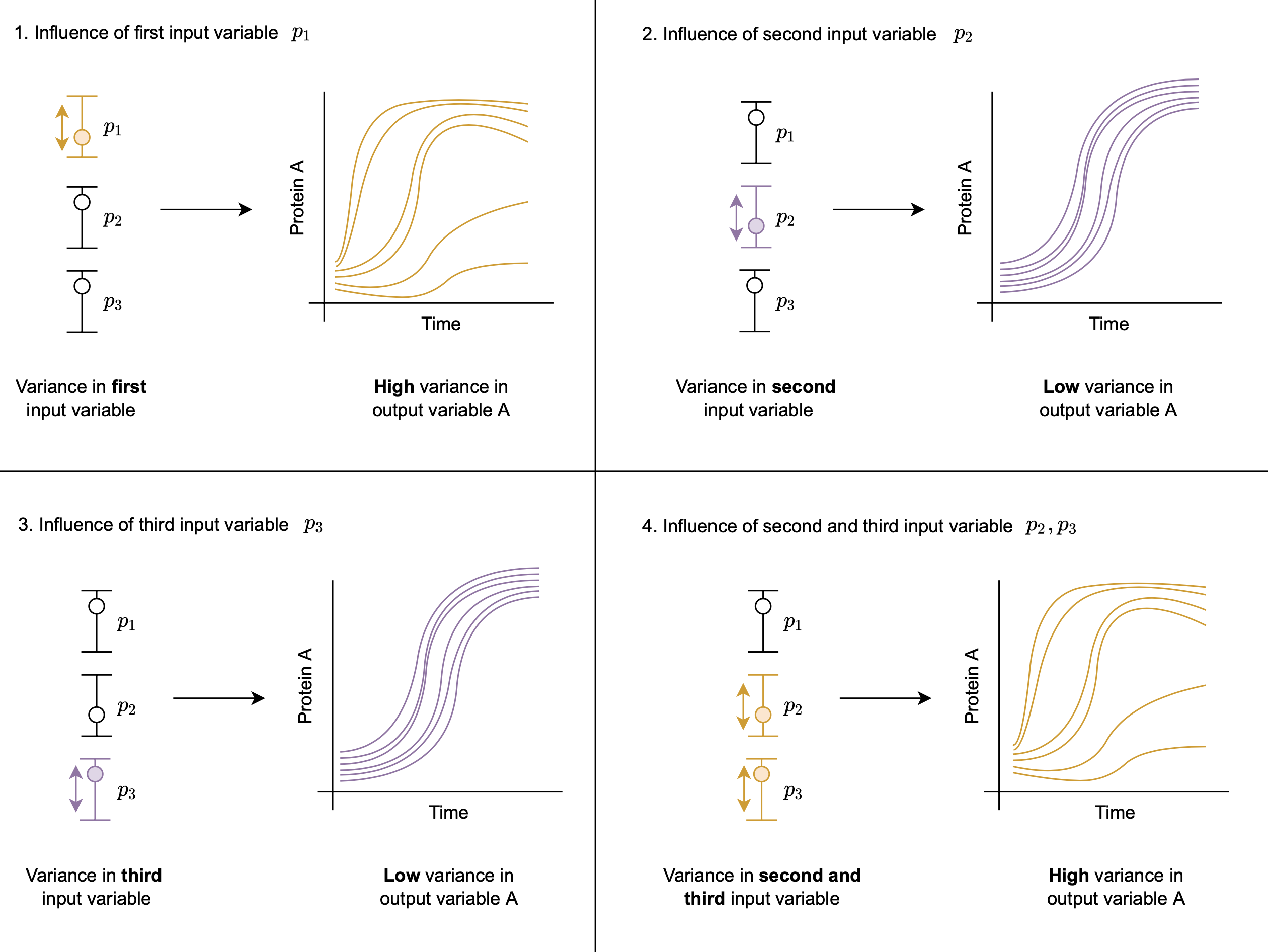

DyadModelOptimizer supports further model exploration through Global Sensitivity Analysis (GSA). GSA investigates how the model output reacts to variance in the model input. For example, we can quantify how the variance of a single input variable, and the combined variance of several input variables, affects the output variables, as illustrated in the figure:

- Influence of first input variable,

- Influence of second input variable,

- Influence of third input variable,

- Influence of the second and third input variables combined.
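The influences listed above can be stated as variance-based (Sobol) sensitivity indices. As a sketch in standard notation, where the symbols $Y$ (a scalar output), $X_i$ (the $i$-th input), $S_i$ and $S_{ij}$ are introduced here for illustration and the inputs are assumed to be independent:

```latex
\begin{aligned}
S_i    &= \frac{\operatorname{Var}\big(\mathbb{E}[Y \mid X_i]\big)}{\operatorname{Var}(Y)}, \\
S_{ij} &= \frac{\operatorname{Var}\big(\mathbb{E}[Y \mid X_i, X_j]\big)}{\operatorname{Var}(Y)} - S_i - S_j .
\end{aligned}
```

In this notation, $S_1$, $S_2$ and $S_3$ correspond to the first three items of the list and $S_{23}$ to the last one.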

The GlobalSensitivity package provides a large variety of algorithms via gsa(f, method). The function used to compute the sensitivity can be based on simulate, so that already specified experiments can be reused. [1]
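To illustrate what such a function f looks like and what a screening method reports, here is a minimal sketch in plain Julia. It is not the GlobalSensitivity implementation: toy_f, elementary_effects, and the n and rel_step keywords are hypothetical names chosen for this example, and the sampling is a simplified one-at-a-time scheme in the spirit of the Morris method.

```julia
using Random, Statistics

# Hypothetical toy model: parameter vector in, vector of outputs out.
# This is the same shape of function that is passed to gsa.
toy_f(p) = [p[1] + 2p[2], p[1] * p[3]]

# Simplified one-at-a-time elementary effects (an illustration only,
# not the algorithm used by GlobalSensitivity).
function elementary_effects(f, lb, ub; n = 200, rel_step = 0.1,
                            rng = MersenneTwister(1))
    nparams = length(lb)
    nout = length(f(lb))
    effects = zeros(nout, nparams, n)
    for k in 1:n
        # Random base point, leaving room for one step inside the bounds.
        u = rand(rng, nparams) .* (1 - rel_step)
        base = lb .+ u .* (ub .- lb)
        y0 = f(base)
        for i in 1:nparams
            stepped = copy(base)
            stepped[i] += rel_step * (ub[i] - lb[i])   # perturb parameter i only
            effects[:, i, k] = (f(stepped) .- y0) ./ rel_step
        end
    end
    # Mean and variance of the effects over all base points.
    means = dropdims(mean(effects; dims = 3); dims = 3)
    variances = dropdims(var(effects; dims = 3); dims = 3)
    return means, variances
end

lb = [1.0, 1.0, 1.0]
ub = [5.0, 5.0, 5.0]
means, variances = elementary_effects(toy_f, lb, ub)
```

Rows correspond to outputs and columns to parameters. A large mean indicates a strong overall influence of a parameter, while a large variance suggests a nonlinear effect or an interaction with other parameters.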

For example, let's consider the Lotka-Volterra predator-prey model and perform GSA using the Morris method.

    using DyadModelOptimizer
    using GlobalSensitivity
    using Statistics
    using OrdinaryDiffEq
    using ModelingToolkit
    import ModelingToolkit: D_nounits as D, t_nounits as t
    using Plots

    # Lotka-Volterra predator-prey model with default initial conditions and parameters
    function lotka()
        @variables x(t)=3.1 y(t)=1.5
        @parameters α=1.3 β=0.9 γ=0.8 δ=1.8
        eqs = [
            D(x) ~ α * x - β * x * y,
            D(y) ~ -δ * y + γ * x * y,
        ]
        @named sys = System(eqs, t)
    end

    sys = complete(lotka())

    tspan = (0.0, 10.0)

    # No measurement data is passed (nothing); the experiment is only simulated
    experiment = Experiment(nothing, sys;
        tspan,
        saveat = range(0, stop = 10, length = 200),
        alg = Tsit5()
    )

    # Bounds of the parameter space explored by the sensitivity analysis
    prob = InverseProblem(experiment,
        [
            sys.α => (1.0, 5.0),
            sys.β => (1.0, 5.0),
            sys.γ => (1.0, 5.0),
            sys.δ => (1.0, 5.0),
        ])

    # Outputs whose sensitivity is analysed: mean of x and maximum of y
    function f(x)
        sol = simulate(experiment, prob, x)
        [mean(sol[:x]), maximum(sol[:y])]
    end

    lb = lowerbound(prob)
    ub = upperbound(prob)

    # One [lower, upper] pair per parameter
    param_range = [[l, u] for (l, u) in zip(lb, ub)]

    m = gsa(f, Morris(total_num_trajectory=1000, num_trajectory=150), param_range)

    GlobalSensitivity.MorrisResult{Matrix{Float64}, Vector{Any}}([0.0674967779125595 -0.01875337837193961 -0.552608526650991 0.42033328337906056; 0.5294498058195454 -1.6501887783016627 0.9702568507816153 -0.61133203614181], [0.1964052197729609 0.031439244956289115 0.552608526650991 0.42033328337906056; 0.5367793140927956 1.651890469824202 0.9930839093194163 0.6250903123899291], [0.09747899490845878 0.002178039416766636 0.4850946869610934 0.11849813776472515; 0.2666131651388051 4.433329761464984 0.32096721229595876 0.20415152365425743], Any[…])

We can plot the means against the variances of the elementary effects for the first output using

    scatter(m.means[1,:], m.variances[1,:], series_annotations=[:α,:β,:γ,:δ], color=:gray)

and for the second output using

    scatter(m.means[2,:], m.variances[2,:], series_annotations=[:α,:β,:γ,:δ], color=:gray)

For the Sobol method, we can similarly do:

    m = gsa(f, Sobol(), param_range, samples=1000)

    GlobalSensitivity.SobolResult{Matrix{Float64}, Nothing, Nothing, Nothing}([-0.0007610556019485068 -5.172065748930247e-5 0.5453148807603555 0.3543531104630022; 0.06427044003904768 0.5003201379643306 0.23565477474811505 0.06541847227042244], nothing, nothing, nothing, [0.006281496020733223 0.0034425589624241188 0.6315636453251169 0.4426772321690809; 0.1310223343698905 0.6194551043285271 0.2860933911031053 0.09421460245903523], nothing)

See the documentation for GlobalSensitivity for more details.
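For orientation, the two matrices in the Sobol result above can be read as first-order and total-order indices, with one row per output and one column per parameter (this reading of the field order is an assumption; the GlobalSensitivity documentation is authoritative). The following plain-Julia sketch shows how such indices can be estimated by sampling. It is not how GlobalSensitivity computes them internally: toy, sobol_sketch and the uniform [0, 1] inputs are hypothetical choices made for this example.

```julia
using Random, Statistics

# Hypothetical toy model with an interaction between inputs 2 and 3.
toy(x) = x[1] + x[2] * x[3]

# Pick-freeze estimate of first-order (S1) and total-order (ST) indices
# for independent inputs that are uniform on [0, 1].
function sobol_sketch(f, d; n = 100_000, rng = MersenneTwister(42))
    A = rand(rng, n, d)                    # two independent sample matrices
    B = rand(rng, n, d)
    fA = [f(view(A, j, :)) for j in 1:n]
    fB = [f(view(B, j, :)) for j in 1:n]
    total_var = var(vcat(fA, fB))
    S1 = zeros(d)
    ST = zeros(d)
    for i in 1:d
        ABi = copy(A)
        ABi[:, i] = B[:, i]                # take only input i from B
        fABi = [f(view(ABi, j, :)) for j in 1:n]
        # First-order index: variance explained by input i alone.
        S1[i] = mean(fB .* (fABi .- fA)) / total_var
        # Total-order index: input i including all its interactions.
        ST[i] = mean((fA .- fABi) .^ 2) / (2 * total_var)
    end
    return S1, ST
end

S1, ST = sobol_sketch(toy, 3)
```

For this toy model the exact values are S1 = (12/19, 3/19, 3/19) and ST = (12/19, 4/19, 4/19): the total-order indices of inputs 2 and 3 exceed their first-order indices because the two inputs interact.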

- [1] Derived from https://docs.sciml.ai/GlobalSensitivity/stable/tutorials/parallelized_gsa/ from https://github.com/SciML/SciMLDocs, MIT licensed; see the repository for details.